05 Jun 2023


All About Streaming Data Mesh

The Current State of Streaming Data

The adoption of streaming data technologies has grown rapidly over the past five years. Every industry understands the importance of accessing and understanding data in real time, so that it can make timely decisions about products, services and customers. The sooner you can access an event, whether it is a customer purchasing an item or an IoT device relaying data, the faster you can process and react to it. Making all this happen in real time is where technologies like Apache Kafka, Amazon Kinesis, Google Pub/Sub, Apache Flink and many more come into play. Most of these are available as managed services, making it easy for companies to adopt them. For example, Confluent and AWS offer Apache Kafka as a managed service.

With rapid adoption of these services, many companies now have access to real-time data.

By their very nature, technologies like Apache Kafka interact with multiple types of data producers and consumers. While data lakes and data warehouses store large amounts of data in centralized repositories, data streaming technologies have always promoted decentralization of services and the data behind them. As data sets move through these systems, being able to manage, access and self-provision data is extremely important. This is where Data Mesh comes into the picture.

What is a Data Mesh?

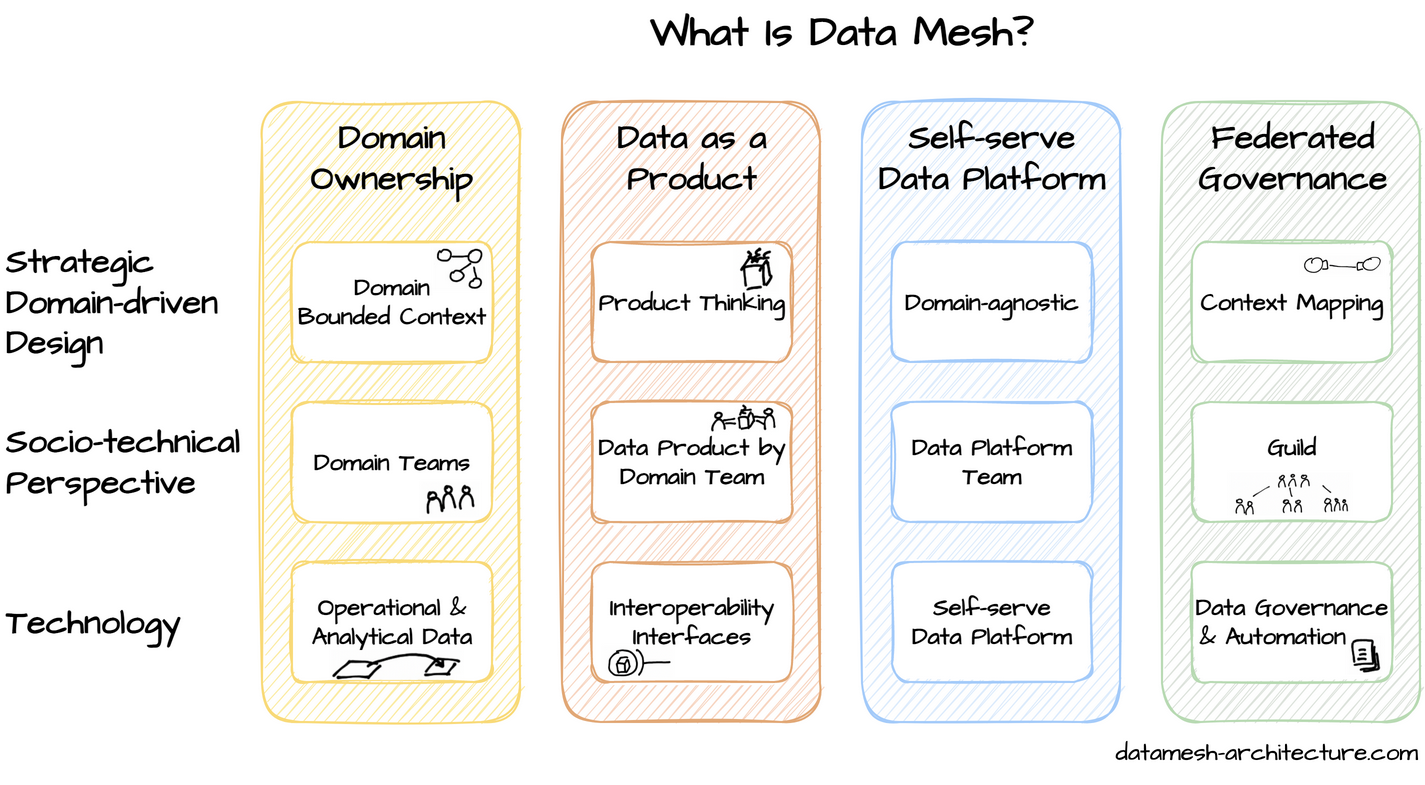

Data Mesh, as first defined by Zhamak Dehghani, is a modern data management architecture that promotes a decentralized way to manage, access and share data. The four core principles of a data mesh are:

- Domain ownership

- Data as a product

- Self-serve data platform

- Federated computational governance

Image source: datamesh-architecture.com

A Data Mesh gives you the agility to work with the data that flows in and out of complex systems across multiple organizations. It reduces dependencies and removes the bottlenecks that arise when accessing data, thereby improving the organization's ability to respond and make business decisions.

While there are tools and technologies that can help you build a Data Mesh over “Data at Rest”, the same is not true for “Data in Motion”. This is where DeltaStream comes into the picture: it enables organizations to develop a Data Mesh over and across all their streaming data, which could span multiple platforms and multiple public cloud providers (Apache Kafka, Confluent Cloud, Kinesis, Pub/Sub, Redpanda, etc.).

Streaming Data Mesh with DeltaStream

What we have built at DeltaStream is a powerful framework that provides a single platform to access your operational and analytical data, in real time, across your entire organization in a decentralized manner. No more expensive pipelines, duplicated data, or central teams built just to manage data lakes and data warehouses.

Let’s revisit the core tenets of Data Mesh and see how DeltaStream helps you achieve each of them, and more.

Domain Ownership

- In DeltaStream, data boundaries are clearly defined using namespaces and role-based access control (RBAC). Each stream is isolated with strict access control, and any query against that stream inherits the same permissions and boundaries. This way, your core data sets remain under your purview and you control what others can access. Queries are also isolated at the infrastructure level, which means they scale independently, reducing cost and operational complexity. With all these controls in place, the core objective of decentralized ownership of data streams is achieved while keeping your data assets secure.
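The inheritance rule described above can be illustrated with a small model. This is only a sketch of the idea, not DeltaStream's API: the `Stream`, `Query` and `can_read` names, the role names and the namespace are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Stream:
    """A stream owned by one domain namespace (hypothetical model)."""
    name: str
    namespace: str
    readers: set[str] = field(default_factory=set)  # roles allowed to read


@dataclass
class Query:
    """A query derived from a source stream; it holds no grants of its own."""
    name: str
    source: Stream


def can_read(role: str, target: Stream | Query) -> bool:
    """Resolve access by falling back to the source stream's grants."""
    stream = target.source if isinstance(target, Query) else target
    return role in stream.readers


# The sales domain owns "orders" and grants read access to one role.
orders = Stream("orders", namespace="sales", readers={"sales_analyst"})
# A query over "orders" inherits exactly the same boundary.
big_orders = Query("big_orders", source=orders)
```

Because the query stores no permissions of its own, there is a single place (the source stream) where the owning domain decides who may see the data.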

Data as a Product

- Making data discoverable and usable while maintaining its quality is central to serving data as a product to your internal teams. Being able to do this as close to the source as possible is extremely beneficial and in line with the data mesh philosophy of domain owners owning the quality of their data. This is exactly what you can achieve with DeltaStream. Using our SQL interface, you can quickly alter and enrich your data and get it ready to use in real time. As your data models change, DeltaStream’s Data Catalog, along with the schema registry, can track your data evolution and help you iterate on your data assets as you grow and evolve.
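To make "quality close to the source" concrete, here is a minimal sketch of the kind of filter-and-enrich step a domain owner might apply before publishing a stream. DeltaStream expresses this in SQL; the Python below only illustrates the logic, and the event fields, the `CUSTOMER_TIERS` lookup and the `enrich` function are assumptions made for the example.

```python
from typing import Iterable, Iterator

# Hypothetical reference data owned by the same domain.
CUSTOMER_TIERS = {"c1": "gold", "c2": "silver"}


def enrich(events: Iterable[dict]) -> Iterator[dict]:
    """Drop malformed events and attach the customer tier to the rest."""
    for event in events:
        if "customer_id" not in event or event.get("amount", 0) <= 0:
            continue  # quality gate: skip records consumers cannot use
        tier = CUSTOMER_TIERS.get(event["customer_id"], "unknown")
        yield {**event, "tier": tier}


raw = [
    {"customer_id": "c1", "amount": 40.0},
    {"amount": 12.5},                      # missing customer_id, dropped
    {"customer_id": "c9", "amount": 5.0},  # unknown customer, kept
]
clean = list(enrich(raw))
```

Consumers of the published stream then see only validated, enriched records and do not have to repeat the cleanup themselves.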

Self-serve Data Platform

- Once you achieve “Domain ownership” and “Data as a Product”, self-service follows. The main objective of self-service is to remove the need for a centralized team to coordinate data distribution across the entire company. Using DeltaStream’s catalog, you can make your high-quality data streams discoverable, and by combining this with our RBAC you can securely share them with both internal and external users. This means the data flows continuously from its source systems to end users without ever needing to be stored, extracted or loaded. This is very powerful: you can securely democratize “streaming data” across your company while also being able to share it with users outside your organization.

Federated Computational Governance

- With everything decentralized, from data ownership to data delivery, governance becomes a very important requirement. Its purpose is to ensure that data originating in a particular domain is consumable in any part of the organization. Schemas and a schema registry go a long way toward ensuring that. Data, just like the organization it serves, changes and evolves over time, so integrating your data streams with a schema registry is critical to maintaining a common language across domains. Interoperability is also a key component of governance, and DeltaStream’s ability to operate across multiple streaming stores is a significant capability here.
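As an illustration of why a schema registry helps, the sketch below shows a deliberately simplified backward-compatibility check between two schema versions. It is not the rule set of any particular registry: the schema representation (field name mapped to a dict with `type` and an optional `default`) and the `is_backward_compatible` function are assumptions for the example.

```python
def is_backward_compatible(old: dict, new: dict) -> bool:
    """Return True if a reader using `new` can still read data written with `old`.

    Simplified rules: shared fields must keep their type, and any field
    added in `new` must declare a default. Removed fields are allowed.
    """
    for name, spec in new.items():
        if name in old:
            if old[name]["type"] != spec["type"]:
                return False  # type of an existing field changed
        elif "default" not in spec:
            return False  # new field cannot be filled in for old records
    return True


v1 = {"order_id": {"type": "string"}, "amount": {"type": "double"}}

# Adds an optional field with a default: old records remain readable.
v2 = {**v1, "currency": {"type": "string", "default": "USD"}}

# Changes the type of an existing field: old records break.
v3 = {"order_id": {"type": "string"}, "amount": {"type": "string"}}

# Adds a required field without a default: old records break.
v4 = {**v1, "region": {"type": "string"}}
```

Running a check like this before a new schema version is published is one way a domain can evolve its data without breaking consumers elsewhere in the organization.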

Conclusion

Decentralization of data ownership and delivery is critical for organizations to be nimble. As Data Mesh gains traction, there are tools and technologies readily available to implement it over your data at rest, but the same can’t be said for streaming data. This is the gap DeltaStream fills. With tooling built around its core processing framework, it enables companies to implement a data mesh on top of streaming data.

As streaming data continues its rapid growth, it is extremely important for organizations to set up the right foundations to maximize the value of their real-time data. While architectures like Data Mesh provide the right framework, platforms like DeltaStream bring it all together.

We offer a free trial of DeltaStream that you can sign up for to start using DeltaStream today. If you have any questions, please feel free to reach out to us.