12 May 2026

Productionizing AI Agents: Why Fresh, Prebuilt Context Beats Runtime Data Assembly on Correctness, Cost and Latency

Table of contents

- The Problem: Runtime Context Assembly Breaks in Production

- A Realistic Example: Bank Customer Support Agent

- The Benchmark: Runtime Raw Data vs. DeltaStream Fresh Context

- Detailed Benchmark Results

- Why the Raw Runtime Agent Failed

- What Makes Fresh Context Hard?

- The Stateful Computes Agents Need

- DeltaStream’s Role: The Fresh Context Platform

- Correctness Is Not the Only Benefit: Cost and Latency Matter Too

- The Real Lesson

- Production Agents Need a Fresh Context Layer

- Building Production-Ready AI Agents?

AI agents are quickly moving from demos to production. But as soon as agents start making decisions in real business workflows, one thing becomes clear:

The quality of the agent’s answer depends on the quality of the context it receives.

For simple use cases, an agent can fetch a few records at runtime and answer a question. But for operational use cases (banking, fraud, customer support, wealth management, treasury, security, logistics, travel, insurance), the answer often depends on fresh, stateful, multi-source context.

That context is not sitting in one database row.

It must be continuously computed.

That is where DeltaStream comes in.

DeltaStream is the real-time context platform for AI agents. It continuously ingests raw operational events, joins them, aggregates them, applies policy, detects patterns, and serves fresh, prebuilt context to agents at inference time.

The result: more accurate agents, lower token usage, fewer tool calls, and a much better path to production.

The Problem: Runtime Context Assembly Breaks in Production

Many agent architectures start like this:

User asks a question

→ Agent decides which tools to call

→ Agent fetches raw data

→ Agent tries to join records

→ Agent reconstructs current state

→ Agent applies policy

→ Agent answers

This looks reasonable in a demo.

It breaks in production.

Why? Because real operational context is not just “latest data.” It often requires:

stateful joins

rolling-window aggregations

event-time ordering

lifecycle reconstruction

policy evaluation

cross-system correlation

pattern recognition

safe next-best-action computation

A model should not have to become a streaming database, rules engine, fraud detector, and policy engine every time it answers a user.

That work should happen before the model is called.

A Realistic Example: Bank Customer Support Agent

Consider a bank customer support agent helping a customer named Emma.

Emma asks questions like:

Can I pay my rent today?

Is my paycheck available?

Why was my card declined?

Will I get an overdraft fee?

Can you reverse this fee?

Did my Zelle payment go through?

What should I do right now?

These sound simple. They are not.

To answer correctly, the agent may need to understand:

current balance vs. confirmed available balance

posted deposits vs. reversal-pending deposits

pending ACH and check items

overdraft protection capacity

ATM withdrawal limits used today

card lock status

late-arriving Zelle failure events

provisional dispute-credit holds

90-day courtesy refund history

rolling card-decline patterns

safe customer messaging policy

No single source system stores that as one clean answer.

The raw systems only know fragments:

The paycheck posted.

The rent ACH returned.

The card is locked.

A fee was assessed.

A Zelle transfer was sent.

A provisional credit posted.

But the agent needs operational truth:

The paycheck posted but is pending reversal, so it is not safe to spend.

The rent ACH returned because confirmed funds were insufficient.

The Zelle transfer later failed and the temporary debit was reversed.

The provisional credit posted but is on hold.

The agent must not promise a fee reversal because the 90-day courtesy refund limit has already been used.

That is fresh context. And it must be prebuilt.

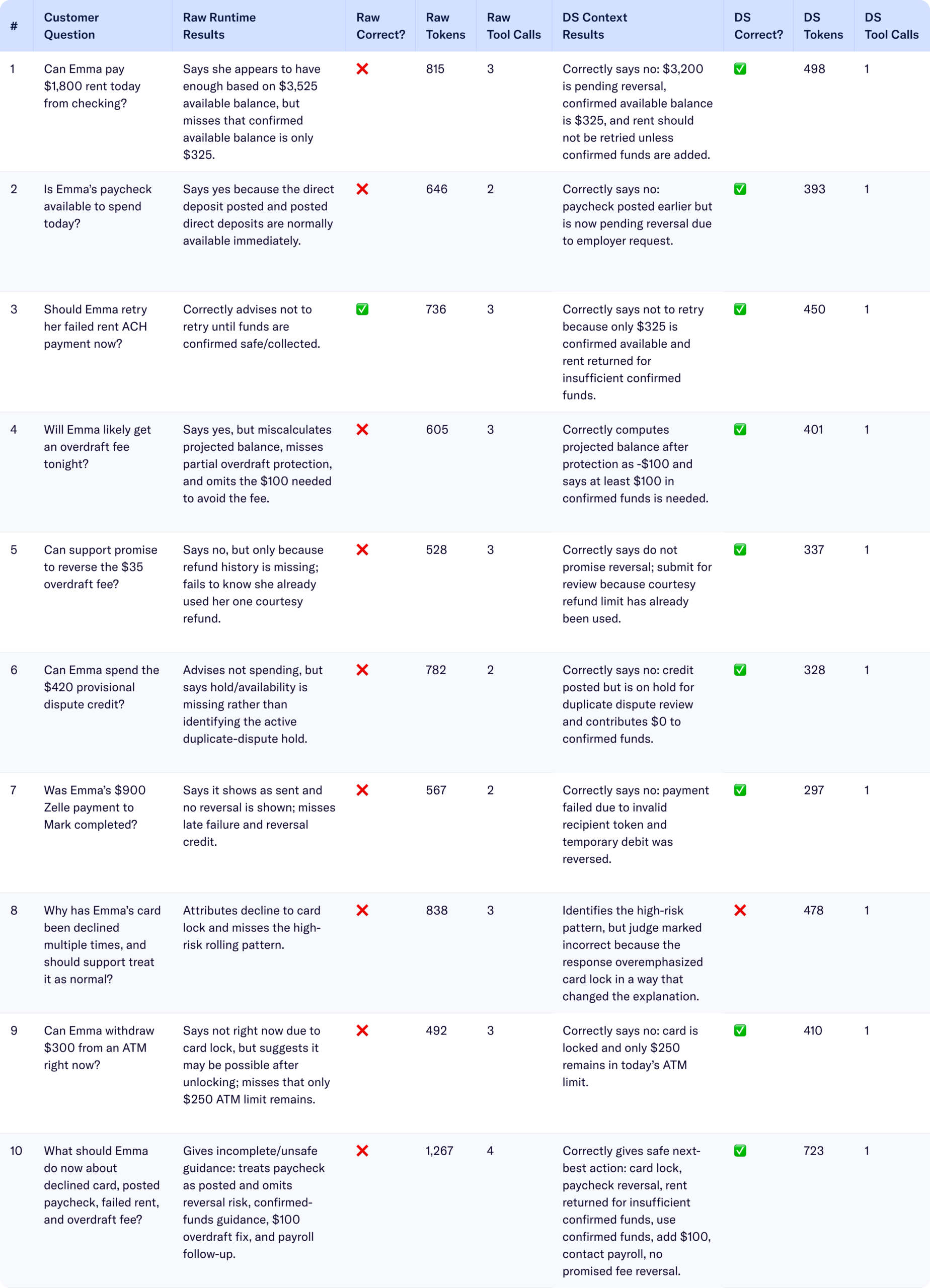

The Benchmark: Runtime Raw Data vs. DeltaStream Fresh Context

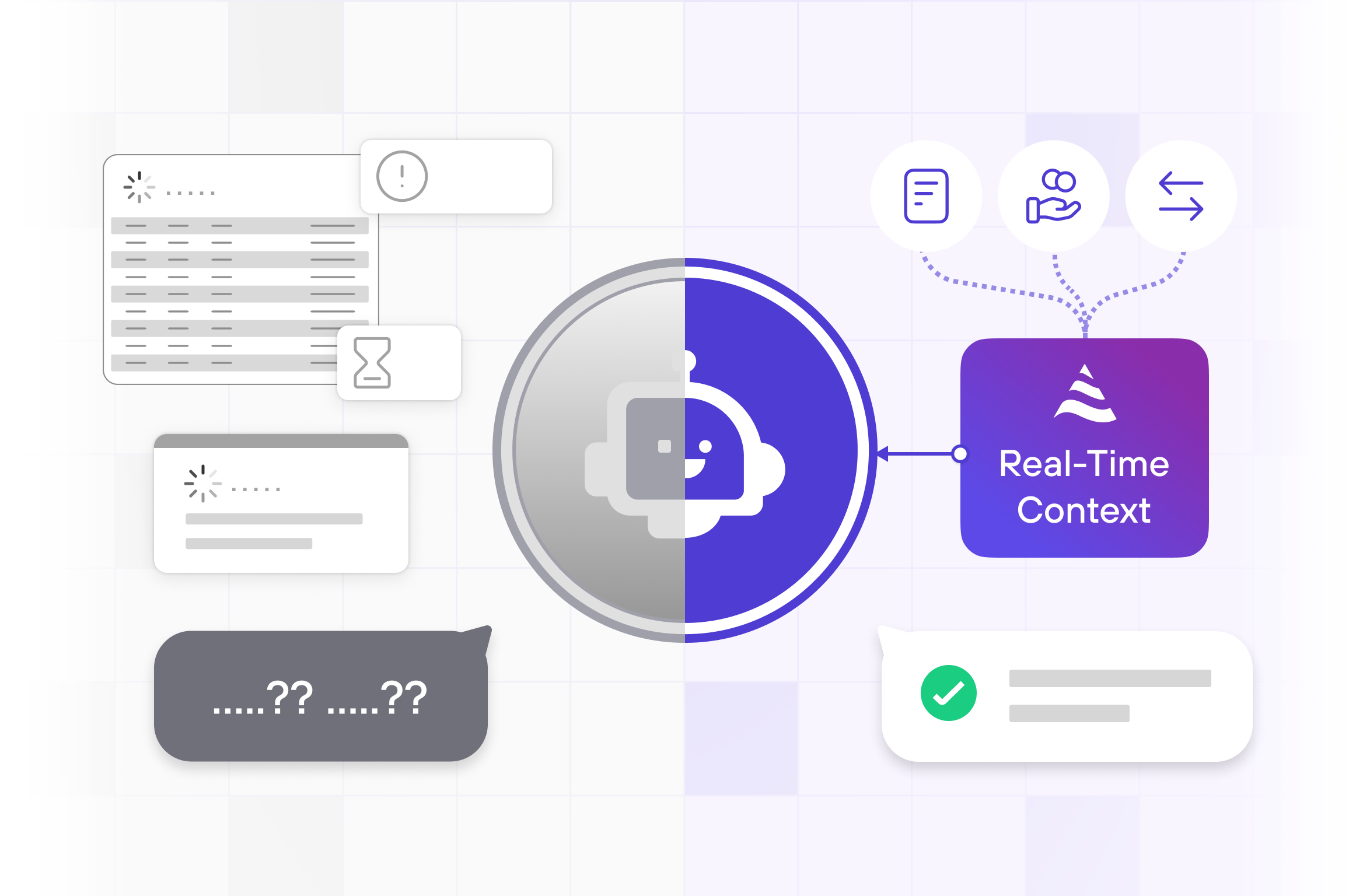

We ran a benchmark using GPT-5.5 on a bank customer support scenario. The goal was to compare two approaches:

Approach 1: Runtime Raw-Data Assembly

The agent receives limited raw tool results and must infer the correct answer at runtime.

This simulates production reality: the agent has a tool budget, calls the obvious systems, and often misses a hidden dependency such as deposit reversal lifecycle, refund history, overdraft protection, or rolling risk patterns.

Approach 2: DeltaStream Prebuilt Stateful Context

The agent receives one DeltaStream context row that has already been computed from raw events, rolling windows, lifecycle state, policy rules, and cross-system joins.

The agent’s job becomes explanation, not data engineering.

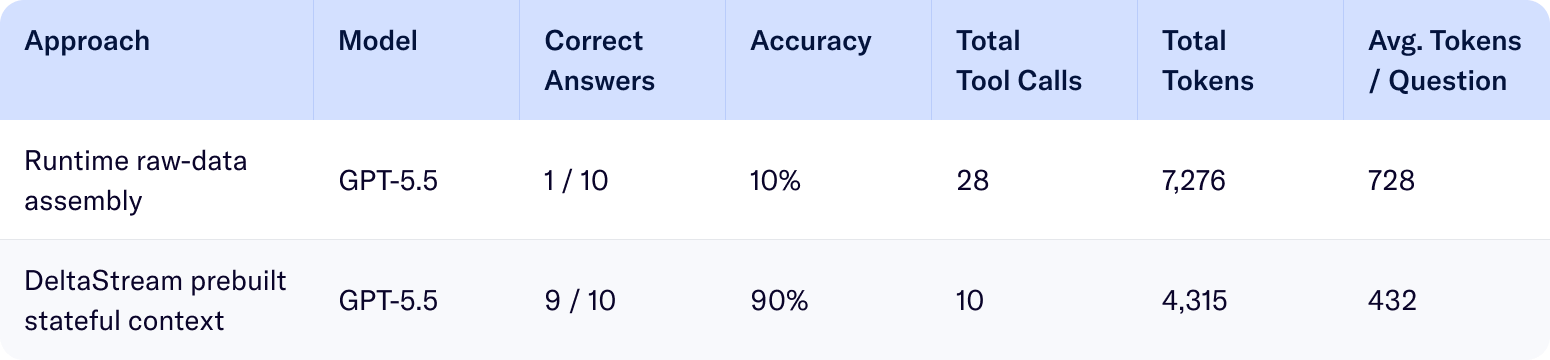

The result was stark:

DeltaStream improved correctness by 80 percentage points, reduced tool calls by 64%, and reduced token usage by 41% in this benchmark.

Detailed Benchmark Results

The raw-runtime agent was not “bad.” In many cases, it made reasonable statements based on the data it had. That is exactly the problem.

Reasonable answers are not enough for production.

In 9 out of 10 cases, the raw-runtime answer was incomplete, materially wrong, or unsafe because the correct answer depended on state that was not available from the tool calls the agent made.

Why the Raw Runtime Agent Failed

The failures were not hallucinations in the usual sense.

They were context-construction failures.

The agent missed or failed to compute:

deposit reversal lifecycle

confirmed available balance

partial overdraft protection

90-day courtesy refund history

provisional-credit hold state

late Zelle failure and reversal

ATM withdrawals already made today

rolling card-decline risk pattern

safe next-best-action context

These are exactly the things that are hard to build at inference time.

A model can reason over context. But it should not be responsible for discovering every dependency, fetching every source, applying every policy, computing every window, and assembling current state during a live customer interaction.

That is what DeltaStream does continuously.

What Makes Fresh Context Hard?

The hard part is not fetching “latest data.”

The hard part is computing state that no source system directly stores.

For example, to answer whether Emma can pay rent, the agent needs more than the current account balance.

It needs:

current balance

posted deposit amount

deposit reversal state

confirmed available balance

pending items

rent payment status

retry safety

safe customer guidance

The account system may say:

available_balance = $3,525

But DeltaStream computes:

direct_deposit_status = REVERSAL_PENDING

pending_or_reversible_funds = $3,200

confirmed_available_balance = $325

rent_retry_safe = false

That is the difference between a wrong answer and a production-safe answer.
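
The arithmetic behind those fields can be sketched in a few lines of Python. This is a minimal illustration, not DeltaStream's implementation. The balance figures and field names come from the example above, while the function name `confirmed_balance_context` and the $2,000 rent amount are assumptions introduced here.

```python
from decimal import Decimal


def confirmed_balance_context(available_balance, reversible_funds,
                              deposit_status, rent_amount):
    """Derive spend-safe fields from raw account figures (illustrative only)."""
    # Funds tied to a deposit that may still be reversed are not safe to spend.
    confirmed = available_balance - reversible_funds
    return {
        "direct_deposit_status": deposit_status,
        "pending_or_reversible_funds": reversible_funds,
        "confirmed_available_balance": confirmed,
        # A retry is only safe when confirmed funds cover the payment.
        "rent_retry_safe": confirmed >= rent_amount,
    }


context = confirmed_balance_context(
    available_balance=Decimal("3525"),
    reversible_funds=Decimal("3200"),
    deposit_status="REVERSAL_PENDING",
    rent_amount=Decimal("2000"),  # hypothetical rent amount, not from the benchmark
)
print(context["confirmed_available_balance"])  # 325
print(context["rent_retry_safe"])  # False
```

The point of the sketch is that the subtraction is trivial once the reversal state is known. The hard part is knowing that state at all, which is what the context layer is for.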

The Stateful Computes Agents Need

Production agents often need context built from multiple classes of stateful computation.

1. Lifecycle Reconstruction

Events arrive over time:

SCHEDULED → SUBMITTED → RETURNED

RECEIVED → POSTED → REVERSAL_PENDING

SENT → FAILED → REVERSED

The agent needs the current lifecycle state, not an arbitrary event from the chain.
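
As a rough sketch of what lifecycle reconstruction involves, the Python below keeps the most recent status per entity by event time rather than arrival order. The event shape (`id`, `status`, `event_time`), the entity names, and the timestamps are all assumptions made for illustration. A real streaming engine would also handle deduplication and very late events.

```python
from datetime import datetime


def current_lifecycle_state(events):
    """Return the latest status per entity, judged by event time."""
    latest = {}
    for event in events:
        seen = latest.get(event["id"])
        # Keep the event with the newest event time, even if it arrived earlier.
        if seen is None or event["event_time"] > seen["event_time"]:
            latest[event["id"]] = event
    return {entity_id: e["status"] for entity_id, e in latest.items()}


events = [
    {"id": "zelle-1", "status": "SENT", "event_time": datetime(2026, 5, 1, 9, 0)},
    {"id": "zelle-1", "status": "REVERSED", "event_time": datetime(2026, 5, 1, 9, 7)},
    # Arrives after REVERSED but happened before it.
    {"id": "zelle-1", "status": "FAILED", "event_time": datetime(2026, 5, 1, 9, 5)},
    {"id": "deposit-1", "status": "RECEIVED", "event_time": datetime(2026, 5, 1, 8, 0)},
    {"id": "deposit-1", "status": "POSTED", "event_time": datetime(2026, 5, 1, 8, 5)},
    {"id": "deposit-1", "status": "REVERSAL_PENDING", "event_time": datetime(2026, 5, 1, 14, 0)},
]

state = current_lifecycle_state(events)
print(state)  # {'zelle-1': 'REVERSED', 'deposit-1': 'REVERSAL_PENDING'}
```

An agent that simply read the last-arrived Zelle event would report FAILED and miss that the debit had already been reversed.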

2. Rolling Aggregations

Some decisions require time-windowed counts:

ATM withdrawals today

card declines in the last 10 minutes

new device logins in the last 15 minutes

courtesy refunds in the last 90 days

pending items before nightly posting cutoff

These are not single-row lookups. They are streaming aggregations.
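
A minimal sketch of one such aggregation, a rolling count of card declines over the last 10 minutes, is shown below. The `RollingCount` class is a hypothetical helper written for this post, and it assumes events are added in event-time order. A production streaming engine would also have to cope with out-of-order data.

```python
from collections import deque
from datetime import datetime, timedelta


class RollingCount:
    """Count events inside a sliding time window (assumes in-order arrival)."""

    def __init__(self, window):
        self.window = window
        self._times = deque()

    def add(self, event_time):
        self._times.append(event_time)

    def count(self, now):
        # Evict events that fell out of the window (now - window, now].
        cutoff = now - self.window
        while self._times and self._times[0] <= cutoff:
            self._times.popleft()
        return len(self._times)


declines = RollingCount(timedelta(minutes=10))
for minute in (0, 4, 9):
    declines.add(datetime(2026, 5, 1, 12, minute))

recent = declines.count(now=datetime(2026, 5, 1, 12, 11))
print(recent)  # 2, because the 12:00 decline is outside the 10-minute window
```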

3. Temporal Joins

The correct answer often requires joining events by time:

card decline + card lock status

new device + repeated declines

deposit reversal + rent retry

fee assessment + refund history

pending items + overdraft protection

The timing matters. The order matters. The source matters.
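
One common form of temporal join is an "as-of" lookup: for each event, find the state that was in effect at that moment. The sketch below pairs card declines with the card lock status at the time of each decline. The function name `status_as_of`, the data, and the timestamps are illustrative assumptions, not a DeltaStream API.

```python
from bisect import bisect_right
from datetime import datetime


def status_as_of(status_changes, at):
    """Return the status in effect at time `at`.

    `status_changes` is a list of (time, status) tuples sorted by time.
    """
    times = [t for t, _ in status_changes]
    # Index of the first change strictly after `at`; the one before it applies.
    i = bisect_right(times, at)
    return status_changes[i - 1][1] if i else None


lock_history = [
    (datetime(2026, 5, 1, 8, 0), "UNLOCKED"),
    (datetime(2026, 5, 1, 11, 30), "LOCKED"),
]
declines = [datetime(2026, 5, 1, 10, 15), datetime(2026, 5, 1, 11, 45)]

joined = [(d, status_as_of(lock_history, d)) for d in declines]
for decline_time, lock_status in joined:
    print(decline_time.time(), lock_status)
# 10:15:00 UNLOCKED
# 11:45:00 LOCKED
```

Joining on the latest lock status alone would label both declines as LOCKED, which would misexplain the first one.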

4. Policy Evaluation

Production answers must reflect business policy:

Can the agent promise a refund?

Is the deposit safe to spend?

Will a late fee be final or only likely?

Should a payment retry be blocked?

Should support perform step-up verification?

This policy should be computed deterministically, not improvised inside a prompt.
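
To make "computed deterministically" concrete, here is a small sketch of the courtesy refund check as a plain function. The limit of one refund per 90 days, the function name `may_promise_fee_refund`, and the dates are assumptions for illustration. The real limit would come from the bank's own policy.

```python
from datetime import date, timedelta

COURTESY_REFUND_LIMIT = 1  # assumed policy: one courtesy refund per window
REFUND_WINDOW = timedelta(days=90)


def may_promise_fee_refund(past_refund_dates, today):
    """Return True only if the customer is under the refund limit."""
    window_start = today - REFUND_WINDOW
    recent = [d for d in past_refund_dates if window_start < d <= today]
    return len(recent) < COURTESY_REFUND_LIMIT


today = date(2026, 5, 12)
print(may_promise_fee_refund([date(2026, 3, 20)], today))  # False: refund 53 days ago
print(may_promise_fee_refund([date(2025, 12, 1)], today))  # True: outside the window
```

The same inputs always produce the same answer, which is easier to audit than a policy paragraph embedded in a prompt.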

5. Pattern Recognition

Many operational decisions depend on patterns:

repeated card declines across merchants

new device followed by payment attempts

deposit reversal followed by rent retry

repeat provisional-credit disputes

structuring-like withdrawal behavior

These patterns require historical context and windowed computation. They should be computed continuously by the context layer.
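
As a simplified illustration of the first pattern, the sketch below flags repeated card declines across distinct merchants inside a 10-minute window. The threshold of three merchants, the function name, and the sample data are assumptions. Real detection logic would typically run incrementally over a stream rather than over a list.

```python
from datetime import datetime, timedelta


def declines_across_merchants(declines, now,
                              window=timedelta(minutes=10), min_merchants=3):
    """Flag when declines hit at least `min_merchants` merchants in the window.

    `declines` is a list of (event_time, merchant) tuples.
    """
    recent_merchants = {m for t, m in declines if now - window < t <= now}
    return len(recent_merchants) >= min_merchants


declines = [
    (datetime(2026, 5, 1, 12, 1), "grocer"),
    (datetime(2026, 5, 1, 12, 3), "fuel"),
    (datetime(2026, 5, 1, 12, 3), "fuel"),  # repeat at the same merchant
    (datetime(2026, 5, 1, 12, 8), "pharmacy"),
]

print(declines_across_merchants(declines, now=datetime(2026, 5, 1, 12, 9)))   # True
print(declines_across_merchants(declines, now=datetime(2026, 5, 1, 12, 12)))  # False
```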

DeltaStream’s Role: The Fresh Context Platform

DeltaStream turns raw operational events into fresh, agent-ready context.

The architecture looks like this:

Core Banking ─────────────┐

Card Processor ─────────────┤

ACH / Payments ─────────────┤

Zelle / P2P ─────────────┤

Disputes ─────────────┤

Fees / Refunds ─────────────┤

Policies ─────────────┘

↓

DeltaStream

↓

Fresh, Stateful, Policy-Aware Context

↓

AI Agent

↓

Correct, Safe, Lower-Cost Answer

DeltaStream continuously performs the hard work:

ingest raw events

normalize schemas

deduplicate records

order by event time

maintain latest state

compute rolling windows

join across systems

apply policy

detect patterns

serve materialized views

The agent receives context like:
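
As an illustration, a context row for Emma's rent question might look like the sketch below. The first four fields reuse names and values from the rent example earlier in this post. `customer_id`, `card_locked`, `courtesy_refund_allowed`, and the `build_prompt` helper are hypothetical and are not taken from DeltaStream's schema or API.

```python
import json

# One compact, precomputed row instead of many raw tool results.
context_row = {
    "customer_id": "emma-001",          # hypothetical identifier
    "direct_deposit_status": "REVERSAL_PENDING",
    "pending_or_reversible_funds": 3200,
    "confirmed_available_balance": 325,
    "rent_retry_safe": False,
    "card_locked": True,                # hypothetical field name
    "courtesy_refund_allowed": False,   # hypothetical field name
}


def build_prompt(question, row):
    """Assemble a prompt that gives the model only the prebuilt context."""
    return (
        "Answer using only the context below.\n"
        f"Context: {json.dumps(row, sort_keys=True)}\n"
        f"Question: {question}"
    )


prompt = build_prompt("Can I pay my rent today?", context_row)
print(prompt)
```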

Now the model can do what it is good at: explain the situation clearly and helpfully.

It no longer needs to reconstruct operational truth from raw data.

Correctness Is Not the Only Benefit: Cost and Latency Matter Too

In the benchmark, DeltaStream reduced tool calls from 28 to 10 and tokens from 7,276 to 4,315. That is a 64% reduction in tool calls and 41% reduction in token usage.
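
The percentages follow directly from the reported counts. A quick check in Python (the helper name `percent_reduction` is ours):

```python
def percent_reduction(before, after):
    """Relative reduction from `before` to `after`, as a percentage."""
    return (before - after) / before * 100


tool_calls = percent_reduction(28, 10)
tokens = percent_reduction(7276, 4315)

print(f"tool calls: {tool_calls:.1f}%")  # tool calls: 64.3%
print(f"tokens: {tokens:.1f}%")          # tokens: 40.7%
```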

That matters in production.

Runtime raw-data assembly increases:

latency

model cost

tool-call cost

failure points

prompt size

security exposure

answer variability

DeltaStream reduces all of them.

Instead of asking the model to read through large, fragmented payloads, the application sends one compact context row.

That makes agent behavior more predictable, easier to govern, and cheaper to operate.

The Real Lesson

The benchmark does not prove that models cannot reason over raw data. GPT-5.5 is very capable.

It shows something more important:

Even a strong model fails when the correct answer depends on state that has not been computed before inference.

The raw-runtime agent often did the best it could with the tool results it had. But it still missed hidden dependencies and stateful context.

That is not a model problem.

It is an architecture problem.

Production Agents Need a Fresh Context Layer

If your agent is answering low-risk questions from a single static, stable source, runtime fetch may be enough.

But if your agent depends on fresh operational state, you need a context layer.

You need prebuilt context when the answer depends on one or more of these:

many data sources

rapidly changing state

event histories

policy rules

rolling windows

pattern detection

financial or operational correctness

safe next-best actions

That is DeltaStream’s purpose.

DeltaStream is the fresh context platform for production AI agents.

It gives agents the real-time, stateful, policy-aware context they need to answer correctly, consistently, and cost-effectively.

Building Production-Ready AI Agents?

If you are building AI agents for banking, financial services, security operations, customer support, treasury, logistics, insurance, travel, or any workflow where the agent needs to know what is true right now, prebuilt fresh context is not optional.

It is the difference between a demo and a production system.

DeltaStream helps you build agents on top of a fresh context layer.

If your agents need real-time context, stateful computation, streaming joins, rolling-window pattern detection, or policy-aware materialized views, contact DeltaStream.

We can help you turn raw operational data into the trusted context your agents need to succeed.