10 Nov 2025

Two Paths to Context: When GenAI Agents Need a Real-Time Context Engine like DeltaStream — and When They Don’t

Table of contents

- Two Approaches to Building Agent Context

- Approach 1: Real-Time Context Engine (RTCE)

- Approach 2: Fetch-on-Demand (MCP/Tool-Based Context Assembly)

- Comparing the Two Architectures

- Governance and Access Control — The Hidden Cost of the Ad-Hoc Model

- When a Real-Time Context Engine like DeltaStream Is Essential

- 1. High-Velocity or Event-Driven Domains

- 2. Multi-Agent or Shared Context Environments

- 3. Real-Time Decisioning and Action Agents

- 4. Context Fusion from Many Asynchronous Sources

- 5. Strict Consistency, Audit, and Governance Requirements

- When an RTCE Is Overkill

- The Recommendation

- The Hybrid Future

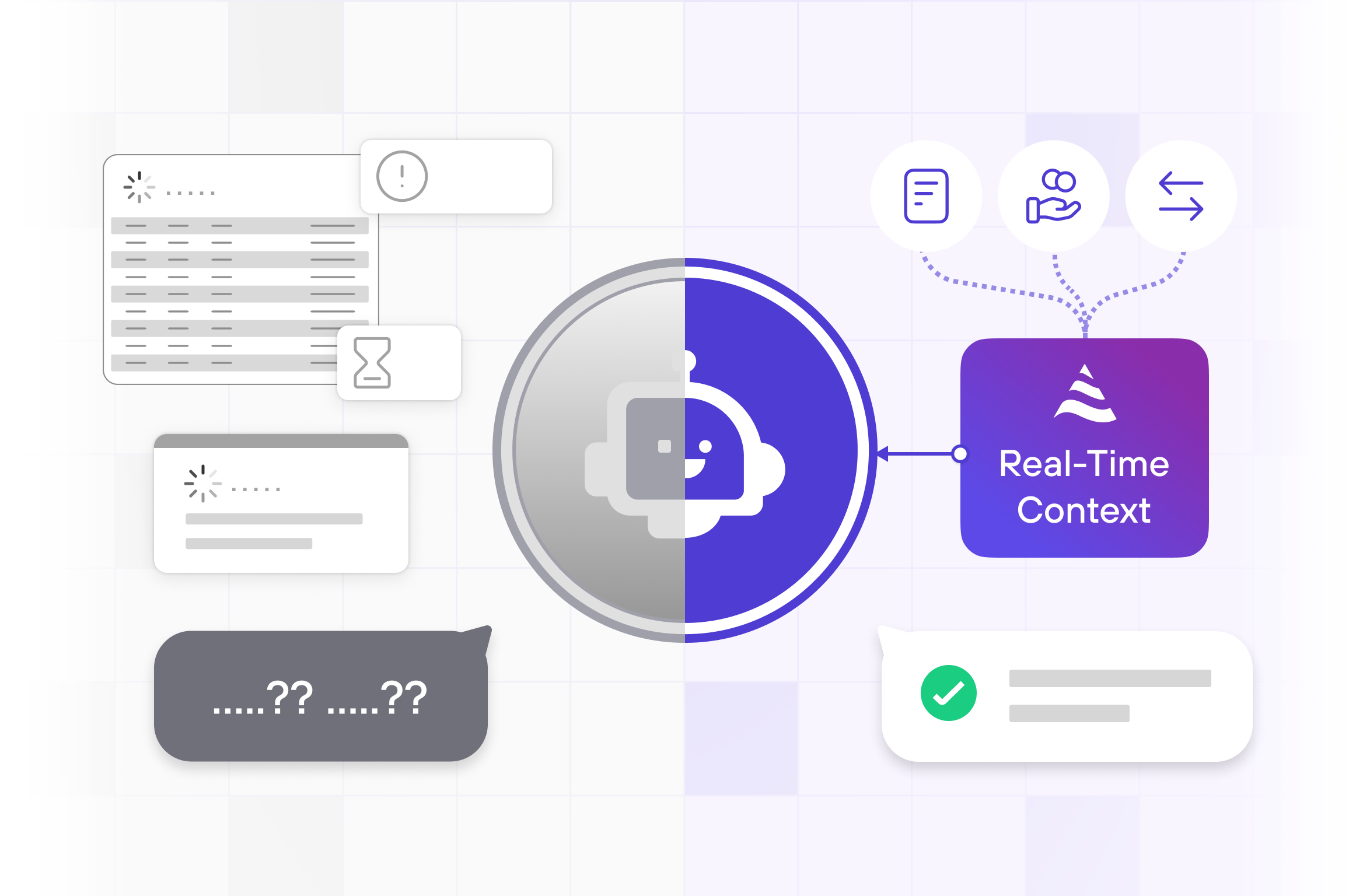

Every intelligent agent depends on context. Whether it’s answering a customer query, approving a loan, or detecting fraud, an agent’s value lies in its ability to understand what’s true right now.

But there are two fundamentally different ways to give an agent that context:

- Build a continuously updated real-time materialized view — what DeltaStream enables as a Real-Time Context Engine (RTCE).

- Fetch and assemble context on demand — querying live systems or tools (via MCP or function calls) whenever the agent needs to think.

Both patterns work. But they differ dramatically in latency, accuracy, cost, and complexity. Let’s unpack the trade-offs — and figure out when DeltaStream’s RTCE is essential and when it’s overkill.

Two Approaches to Building Agent Context

Approach 1: Real-Time Context Engine (RTCE)

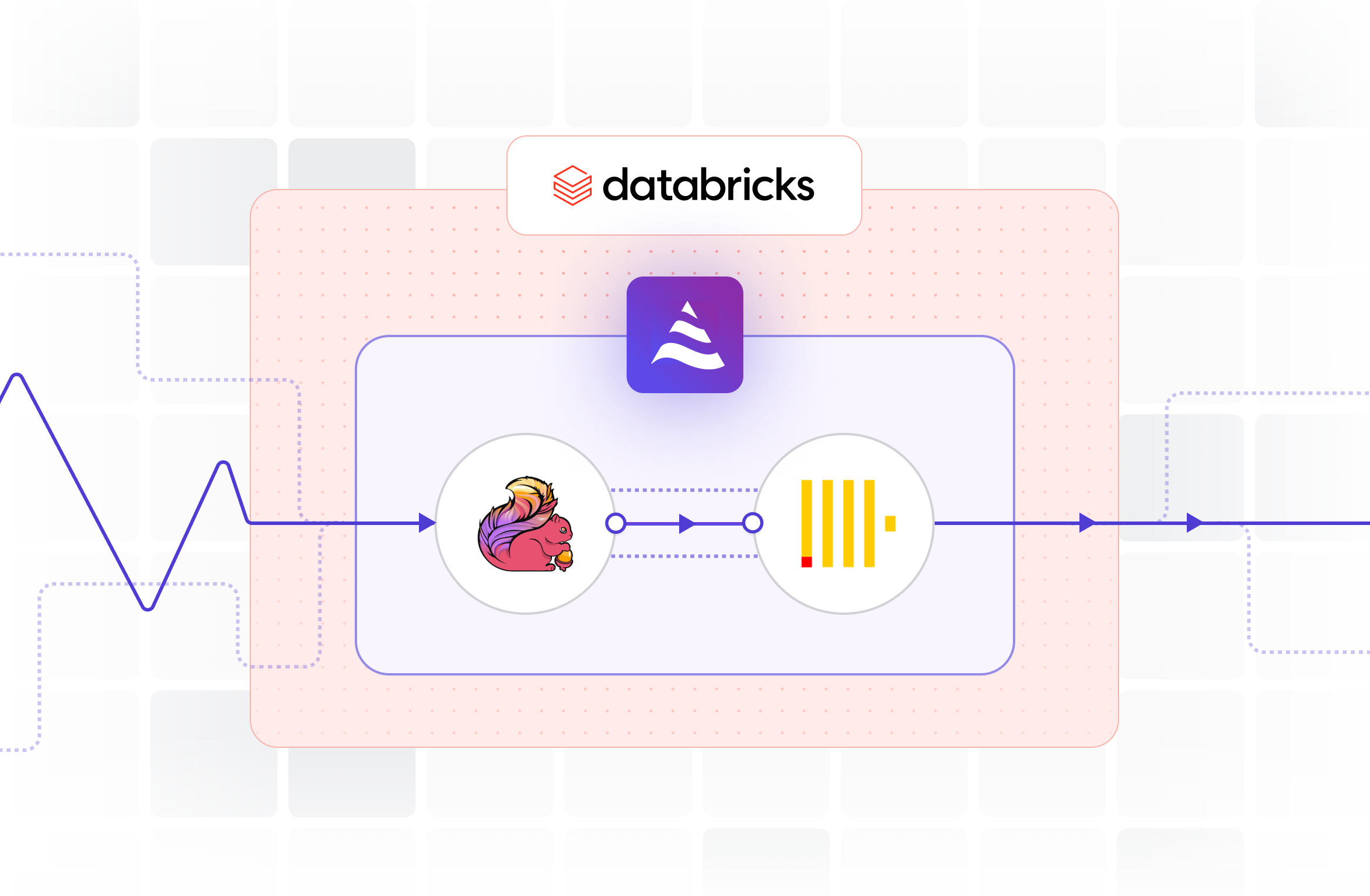

In this model, you continuously ingest and fuse data from multiple systems — databases, APIs, streams, logs — into a live materialized view.

That fused state is always fresh, queryable in milliseconds, and acts as the single source of real-time truth for agents.

DeltaStream provides this layer:

- It subscribes to upstream streams (Kafka, CDC, APIs).

- It continuously joins, filters, and aggregates the latest facts.

- It exposes a unified, low-latency context endpoint as MCP tools to agents.

Example:

A “Trading Assistant” agent that monitors live market data, user portfolios, and risk positions. DeltaStream fuses those feeds into a real-time portfolio view. When the agent is prompted, it already has the current context — no per-query fetch or recomputation.
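To make the idea concrete, here is a minimal in-memory sketch of a continuously maintained view. It is not DeltaStream's API: the `PortfolioView` class, the event shapes, and the symbol and user names are all hypothetical, chosen only to show that writes happen as events arrive and the agent's read is a plain lookup.

```python
from dataclasses import dataclass, field


@dataclass
class PortfolioView:
    """Hypothetical in-memory stand-in for a continuously maintained view."""
    prices: dict = field(default_factory=dict)     # symbol -> latest price
    positions: dict = field(default_factory=dict)  # (user, symbol) -> quantity

    def apply(self, event: dict) -> None:
        """Fold one upstream event into the view as it arrives."""
        if event["type"] == "price":
            self.prices[event["symbol"]] = event["price"]
        elif event["type"] == "trade":
            key = (event["user"], event["symbol"])
            self.positions[key] = self.positions.get(key, 0) + event["qty"]

    def context_for(self, user: str) -> dict:
        """Read path used by the agent: a lookup, not a fan-out of fetches."""
        holdings = {
            symbol: {"qty": qty, "value": qty * self.prices.get(symbol, 0.0)}
            for (owner, symbol), qty in self.positions.items()
            if owner == user and qty != 0
        }
        return {
            "user": user,
            "holdings": holdings,
            "total_value": sum(h["value"] for h in holdings.values()),
        }


# Made-up events standing in for market and trade feeds.
view = PortfolioView()
for event in [
    {"type": "trade", "user": "u1", "symbol": "ACME", "qty": 10},
    {"type": "price", "symbol": "ACME", "price": 12.5},
    {"type": "trade", "user": "u1", "symbol": "ACME", "qty": -4},
    {"type": "price", "symbol": "ACME", "price": 13.0},
]:
    view.apply(event)

context = view.context_for("u1")
```

The point of the design is that the cost of joining feeds is paid on the write path, once per event, rather than on every agent prompt.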

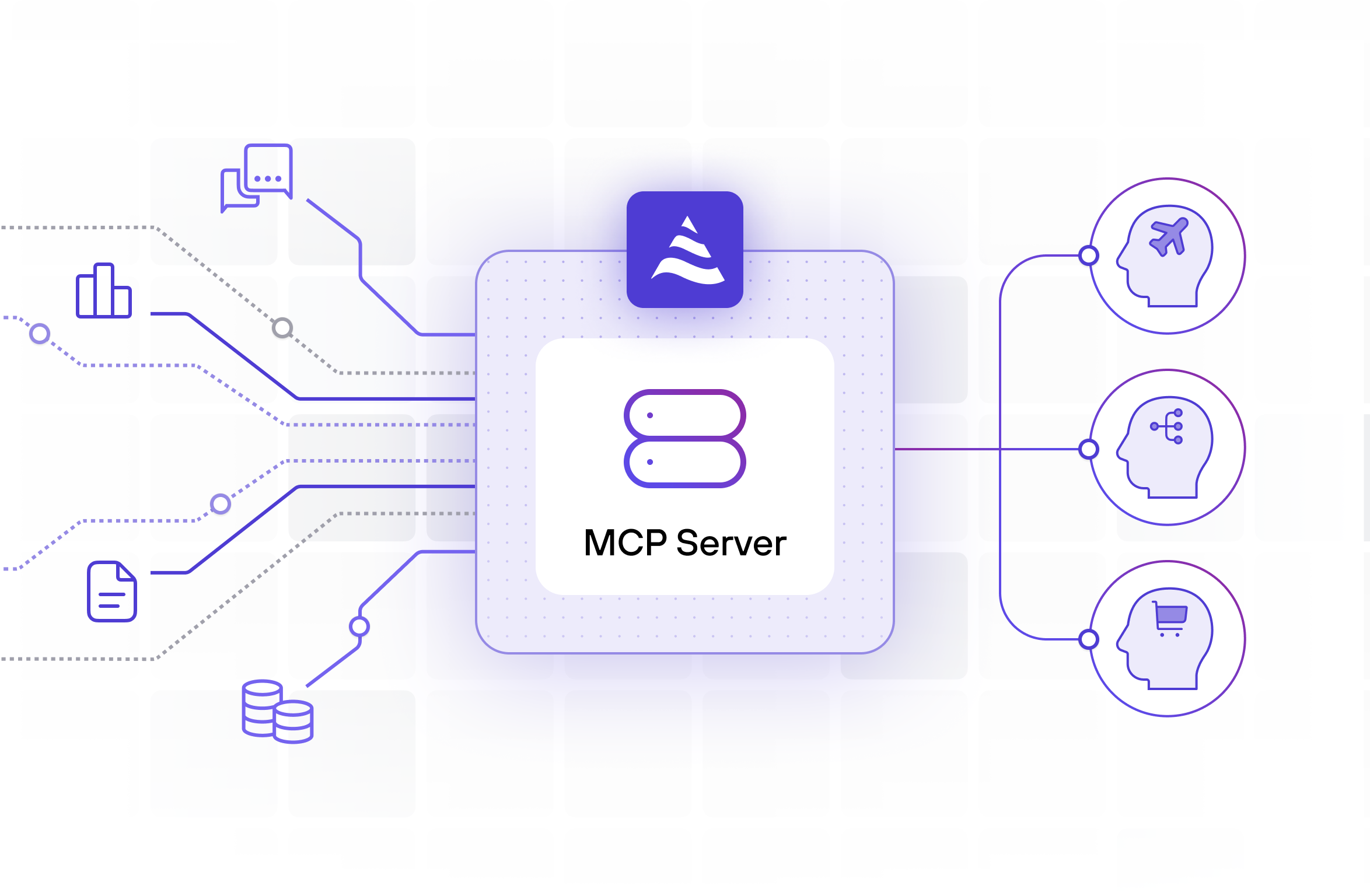

Approach 2: Fetch-on-Demand (MCP/Tool-Based Context Assembly)

Here, the agent doesn’t maintain any live context. Instead, at runtime, it uses tools (via the Model Context Protocol, APIs, or direct database calls) to fetch whatever data it needs, then combines it in-memory before reasoning.

Example:

A “Support Agent” that needs to check a user’s order status. The LLM calls:

- get_order_status(user_id)

- get_payment_info(user_id)

- get_shipping_status(order_id)

The agent merges these responses into a single context and reasons over it.

No continuous updates, no persistent state — everything happens ad hoc.
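A rough sketch of that flow, assuming the three tools are ordinary functions. The function names come from the example above; the bodies are stubs with made-up return values, and `assemble_context` is a hypothetical helper, not part of MCP.

```python
def get_order_status(user_id: str) -> dict:
    # Stub standing in for a call to an order system (values are made up).
    return {"order_id": "o-1001", "status": "shipped"}


def get_payment_info(user_id: str) -> dict:
    # Stub standing in for a call to a payments system.
    return {"payment_status": "captured"}


def get_shipping_status(order_id: str) -> dict:
    # Stub standing in for a call to a carrier or logistics API.
    return {"carrier_status": "in_transit"}


def assemble_context(user_id: str) -> dict:
    """Fetch each piece at request time and merge it in memory."""
    order = get_order_status(user_id)
    payment = get_payment_info(user_id)
    # The shipping lookup needs the order id, so it has to wait for the order call.
    shipping = get_shipping_status(order["order_id"])
    return {"user_id": user_id, **order, **payment, **shipping}


context = assemble_context("u-42")
```

Nothing here survives the request: the next prompt repeats all three calls, and each call sees whatever the backing system holds at that moment.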

Comparing the Two Architectures

Governance and Access Control — The Hidden Cost of the Ad-Hoc Model

One of the least discussed — but most serious — weaknesses of the fetch-on-demand approach is governance.

In enterprise environments, each agent call that fetches live data becomes a mini-integration point:

- Multiple credential sets (API keys, OAuth tokens) live in the agent runtime.

- Each call bypasses central visibility and often lacks audit trails.

- Data lineage — which data was used to make a decision — is fragmented across tool logs.

This creates problems in three dimensions:

- Security Exposure: Each tool call requires authentication to different back-end systems. Scaling to 10+ tools per agent means dozens of live credentials circulating through model runtimes, which is a nightmare to manage securely.

- Policy Drift: If each agent individually decides who can access what, access rules diverge. There’s no central place to enforce “who can see which column” or “what data leaves which boundary.”

- Audit and Compliance Gaps: When an auditor asks, “What data did this agent use to approve this trade?”, you can’t reconstruct the exact snapshot. The data was fetched on the fly and discarded.

By contrast, DeltaStream’s RTCE acts as a controlled gateway layer:

- Access control is enforced at the query level (row/column policies, RBAC).

- Every contextual view is versioned and timestamped.

- Data lineage is auditable and centrally logged.
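The gateway idea can be sketched in a few lines. This is an illustration only, not DeltaStream's policy model: `COLUMN_POLICY`, `read_view`, the role names, and the row contents are hypothetical.

```python
from datetime import datetime, timezone

# Hypothetical column-level policy: role -> columns that role may see.
COLUMN_POLICY = {
    "support_agent": {"order_id", "status"},
    "risk_agent": {"order_id", "status", "card_last4"},
}

audit_log = []  # central record of every read


def read_view(role: str, row: dict, version: int) -> dict:
    """Return only the columns the role may see, and log the access."""
    allowed = COLUMN_POLICY.get(role, set())
    visible = {key: value for key, value in row.items() if key in allowed}
    audit_log.append({
        "role": role,
        "view_version": version,
        "columns": sorted(visible),
        "read_at": datetime.now(timezone.utc).isoformat(),
    })
    return visible


order_row = {"order_id": "o-1001", "status": "shipped", "card_last4": "4242"}
support_view = read_view("support_agent", order_row, version=12)
risk_view = read_view("risk_agent", order_row, version=12)
```

Because every agent reads through one function, the policy lives in one place and the log answers "who saw which columns of which version" without stitching together per-tool logs.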

⚖️ In regulated industries (finance, healthcare, insurance, public sector), this alone can justify an RTCE.

When a Real-Time Context Engine like DeltaStream Is Essential

1. High-Velocity or Event-Driven Domains

If your environment changes faster than an agent can query — markets, fraud, IoT, logistics — the only reliable way to maintain correctness is to stream updates continuously.

Example:

A fraud-detection agent correlating live transactions, login patterns, and device telemetry. Fetching each source at runtime would be too slow: by the time the agent has assembled its context, the fraudulent transaction has already gone through.

DeltaStream’s RTCE ensures context is up-to-date at all times.

2. Multi-Agent or Shared Context Environments

If multiple agents (risk, compliance, support) need to see the same current state, a shared RTCE avoids duplication and ensures consistency.

Without DeltaStream, each agent might assemble slightly different context snapshots, leading to divergent actions.
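A toy illustration of that divergence, with made-up account data and hypothetical names (`fetch_live`, `published`): two agents that fetch independently can straddle an upstream update, while two agents that read one published snapshot cannot.

```python
# Hypothetical upstream state that keeps changing.
source = {"account": "a-9", "exposure": 100}


def fetch_live() -> dict:
    """Fetch-on-demand: each caller gets whatever is current at call time."""
    return dict(source)


# Two agents fetch independently, with an upstream update in between.
risk_ctx = fetch_live()
source["exposure"] = 140
compliance_ctx = fetch_live()
diverged = risk_ctx != compliance_ctx

# Shared view: one versioned snapshot is published and both agents read it.
published = {"version": 7, **source}
risk_shared = dict(published)
compliance_shared = dict(published)
consistent = risk_shared == compliance_shared
```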

3. Real-Time Decisioning and Action Agents

When the agent must act in real time — adjust a price, route an alert, trigger a mitigation — latency is non-negotiable.

Continuous materialization keeps context available for instant decisioning.

4. Context Fusion from Many Asynchronous Sources

If the required context spans multiple APIs or systems with different update cadences, building a pre-joined real-time view is far more efficient than orchestrating dozens of API calls at runtime.
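One simple way to picture a pre-joined view over sources with different cadences is a last-value join: keep the newest fact per source for each entity, and let reads return the fused row. The sketch below is an assumption-laden toy, with hypothetical names (`upsert`, `read`, `truck-7`) and integer event times standing in for real timestamps.

```python
from collections import defaultdict

# entity_id -> {source_name: (value, event_time)}
fused = defaultdict(dict)


def upsert(entity_id: str, source: str, value, event_time: int) -> None:
    """Keep only the newest fact per source; drop out-of-order updates."""
    current = fused[entity_id].get(source)
    if current is None or event_time >= current[1]:
        fused[entity_id][source] = (value, event_time)


def read(entity_id: str) -> dict:
    """Single lookup that returns the latest value from every source."""
    row = fused.get(entity_id, {})
    return {source: value for source, (value, _) in row.items()}


# Sources report at their own pace and not always in order.
upsert("truck-7", "gps", "depot", 100)
upsert("truck-7", "inventory", 40, 60)
upsert("truck-7", "gps", "highway", 130)
upsert("truck-7", "gps", "stale-report", 90)  # older than what we hold, ignored

row = read("truck-7")
```

The agent's read cost stays at one lookup no matter how many sources feed the row.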

5. Strict Consistency, Audit, and Governance Requirements

Financial, healthcare, and regulated environments often require that all decisions derive from the same contextual snapshot.

An RTCE provides a timestamped, versioned view that’s auditable and replayable — something ad hoc API fetching can’t guarantee.
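As a sketch of what "replayable" can mean in practice, the snippet below keeps every committed snapshot and answers as-of queries with a binary search. `SnapshotStore` and its methods are hypothetical, and the integer timestamps and credit-limit values are made up.

```python
import bisect


class SnapshotStore:
    """Hypothetical append-only store of timestamped context snapshots."""

    def __init__(self) -> None:
        self._times = []      # commit timestamps, strictly increasing
        self._snapshots = []  # snapshot state at each commit

    def commit(self, ts: int, state: dict) -> int:
        if self._times and ts <= self._times[-1]:
            raise ValueError("timestamps must be strictly increasing")
        self._times.append(ts)
        self._snapshots.append(dict(state))
        return len(self._times)  # version number, starting at 1

    def as_of(self, ts: int) -> dict:
        """Return the snapshot that was current at time ts."""
        idx = bisect.bisect_right(self._times, ts)
        if idx == 0:
            raise LookupError("no snapshot committed at or before ts")
        return {
            "version": idx,
            "committed_at": self._times[idx - 1],
            "state": dict(self._snapshots[idx - 1]),
        }


store = SnapshotStore()
store.commit(100, {"credit_limit": 5000})
store.commit(200, {"credit_limit": 3000})

# An auditor asks what the agent could have seen at time 150.
seen = store.as_of(150)
```

If each agent decision records the version it read, the exact context behind that decision can be looked up later.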

When an RTCE Is Overkill

- Low-Frequency Context Changes

  - HR or policy assistants, documentation Q&A, internal knowledge retrieval.

  - Data changes daily or weekly; real-time materialization doesn’t add value.

- Single-Entity Context with Simple Tools

  - If the agent only needs to fetch one or two data points (e.g., user profile + recent ticket), direct API calls via MCP are simpler and cheaper.

- Prototype or Exploration Phase

  - When the agent’s scope is narrow or evolving, tool-based context lets teams iterate faster before committing to schema and stream design.

- Non-Critical Latency or Tolerance for Slight Staleness

  - If being a few minutes behind doesn’t affect accuracy or UX, dynamic context assembly at runtime is fine.

The Recommendation

Use DeltaStream (RTCE) when:

- The agent’s output depends on continuously changing data.

- Latency matters — responses must reflect live state.

- Multiple agents must share the same view of truth.

- You need context fusion across many asynchronous systems.

- Auditability and governance are important.

→ Think: Fraud detection, real-time trading, customer ops dashboards, IoT control, supply-chain agents.

Skip or defer RTCE when:

- Data is slow-moving and consistency is not critical.

- Agents interact with few, stable data sources.

- Acceptable latency is measured in seconds or minutes, not milliseconds.

- You’re prototyping or exploring agent behavior.

→ Think: Knowledge assistants, internal copilots, research summarizers.

The Hybrid Future

The most powerful architectures will blend both models:

- DeltaStream maintains the real-time fused state.

- MCP tools fetch or enrich secondary context as needed.

- The agent reasons over both — live state + deep knowledge — for a complete, correct, and current worldview.
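A minimal sketch of the hybrid pattern, under stated assumptions: `live_view` stands in for the continuously maintained state, `lookup_policy` is a hypothetical on-demand tool, and all values are made up.

```python
# Stand-in for the real-time fused state, keyed by user.
live_view = {"u-42": {"open_orders": 2, "last_event": "payment_failed"}}


def lookup_policy(topic: str):
    """Hypothetical on-demand tool for slow-moving reference knowledge."""
    policies = {
        "payment_failed": "Retry once, then offer an alternate payment method.",
    }
    return policies.get(topic)


def build_context(user_id: str) -> dict:
    """Start from live state, then enrich with secondary context if any."""
    live = live_view[user_id]
    context = {"live": live}
    guidance = lookup_policy(live["last_event"])
    if guidance is not None:
        context["reference"] = guidance
    return context


context = build_context("u-42")
```

The live state decides which secondary lookup is worth making, so the on-demand call stays narrow and cheap.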

TL;DR

Fetch-on-demand = simple, flexible, but slower and inconsistent.

Real-time context engine (DeltaStream) = complex to set up, but essential when speed, consistency, and accuracy matter.

When your agents must react, not just reason —

when correctness depends on the latest truth —

that’s when DeltaStream stops being optional and becomes foundational.

This blog was written by the author with assistance from AI to help with outlining, drafting, or editing.